April 18, 2026 — The headlines this week told a story that’s impossible to ignore. OpenAI is committing over $20 billion to Cerebras-powered server capacity. The European Commission just awarded a €180 million sovereign cloud contract to reduce dependency on American tech giants. Meta is raising the price of its Quest headsets because AI data centers are consuming so much memory that consumer hardware costs are surging. And Amazon acquired Globalstar for $11.57 billion to build a satellite internet network that can underpin always-on AI connectivity from orbit.

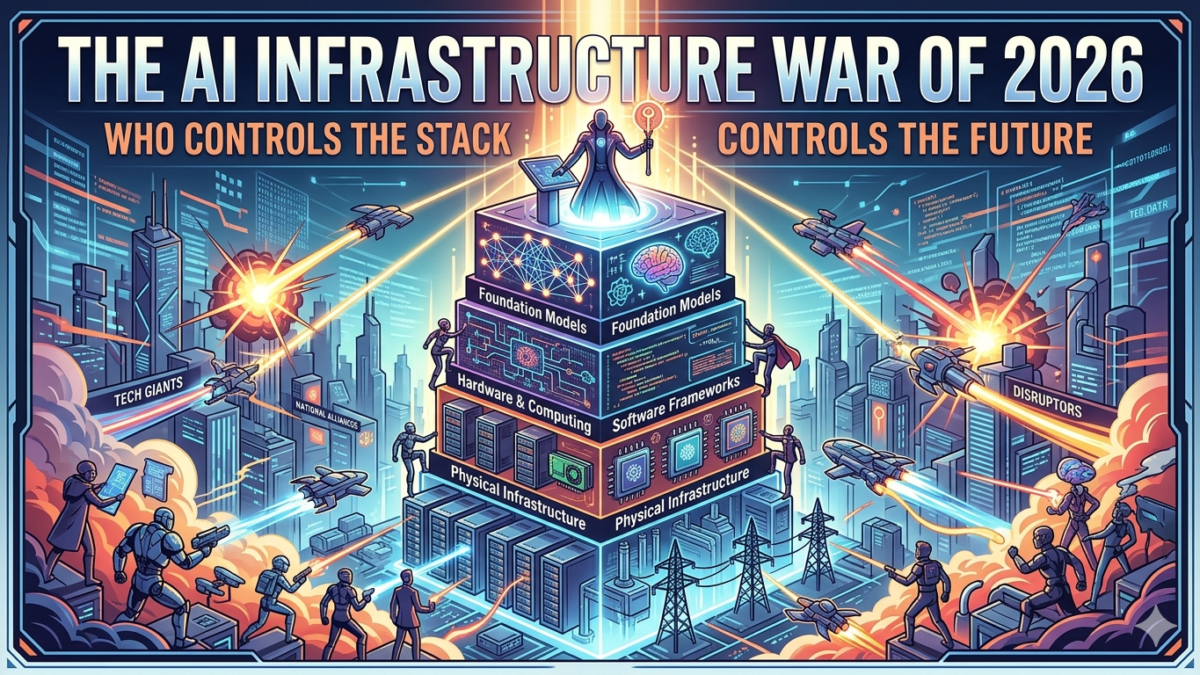

Strip away the individual company names, and one pattern emerges with striking clarity: the AI race of 2026 isn’t primarily a competition between models. It’s a war over infrastructure.

The Stack Beneath the Intelligence

For the past three years, the public narrative around artificial intelligence has centered on benchmarks, chatbots, and the question of which lab would build the first truly general intelligence. Those conversations still matter — but they increasingly feel like a distraction from the more consequential battle happening at the infrastructure layer.

Consider what’s actually being competed over right now:

- Compute: OpenAI’s reported $20 billion Cerebras commitment signals that model leadership is now inseparable from owning or controlling massive compute reserves. Nvidia remains the dominant supplier, but the scramble to build alternatives — from Meta’s custom silicon to South Korea’s DeepX eyeing an IPO — shows how strategically critical chip independence has become.

- Power: America’s largest utilities are planning a historic $1.4 trillion spending cycle, driven almost entirely by AI data center demand. More than half of planned U.S. data center builds have already been delayed or canceled due to power constraints and supply shortages. In this environment, the companies that secure reliable electricity aren’t just cutting costs — they’re acquiring a competitive moat that may prove more durable than any model architecture.

- Connectivity: Amazon’s acquisition of Globalstar, which expands the satellite internet ambitions it now markets as “Amazon Leo,” is a direct challenge to SpaceX’s Starlink. The subtext is unmistakable: if AI workloads require always-on connectivity, whoever controls orbital infrastructure controls a critical chokepoint of the AI economy.

- Sovereignty: Europe’s €180 million sovereign cloud award is not a procurement footnote. It is a government making a strategic declaration: that AI infrastructure is national capacity, not a vendor relationship. Expect more governments worldwide to follow.

The Consumer Caught in the Crossfire

One underappreciated consequence of the infrastructure arms race is that its costs are already flowing downstream to ordinary consumers. Meta’s price hikes on Quest VR headsets — driven explicitly by memory chip shortages caused by AI data center demand — are an early sign of what happens when hyperscaler appetites compete with consumer hardware supply chains.

The same dynamic is playing out across laptops, gaming PCs, and enterprise networking equipment. The billions being poured into training clusters and inference farms are bidding up the components that go into everyday devices. For the next two to three years, consumers and businesses outside the AI infrastructure race are likely to feel as though they are paying a tax imposed by it.

The Workforce Caught in the Transition

This week Snap announced roughly 1,000 layoffs, about 16% of its workforce, and explicitly attributed them to AI-driven efficiency gains. That milestone deserves careful reading. Snap isn’t a struggling startup cutting costs to survive. It is a profitable, product-focused company making a calculated bet that AI can do at scale what human teams currently do at greater expense.

Snap will not be the last. The companies most exposed aren’t necessarily the most obvious ones. The efficiency gains from AI agents in software development, content moderation, ad operations, and customer support are now measurable enough that CFOs can model them. What was previously a speculative argument — “AI will replace X jobs” — is increasingly a spreadsheet exercise with defensible numbers attached.

The honest framing isn’t that AI is destroying jobs. It’s that AI is compressing the labor required to reach the same business outcomes, which means some organizations will need fewer people and others will redirect those people toward higher-leverage work. Which outcome predominates will depend heavily on whether companies invest their AI-driven savings into growth or return them to shareholders.

The Geopolitical Dimension Is No Longer Optional Reading

One of the most important trends of April 2026 is how thoroughly geopolitics has become inseparable from technology strategy. AI startup acquisitions are now subject to national security review in ways that were unimaginable five years ago. Chip export controls are shaping which AI ecosystems can scale in which geographies. Sovereign cloud mandates are fragmenting what was once imagined as a unified global internet into regional infrastructure zones.

For founders and enterprise technology leaders, this means the era of pure product-market-fit thinking — where the best technology wins regardless of origin — is definitively over. Where your infrastructure is hosted, who manufactures your chips, and which government has jurisdiction over your data are now first-order strategic decisions, not compliance afterthoughts.

The Physical AI Breakthrough Nobody Is Talking About Enough

Forrester’s newly released Top 10 Emerging Technologies for 2026 report identifies a pivot that deserves more mainstream attention: AI is moving decisively from digital workflows into physical environments. Robots, vehicles, and ambient computing systems are becoming the next major deployment surface for AI capabilities.

The bottleneck, in this view, isn’t hardware or software but wireless infrastructure. The convergence of 6G development and robotics is creating a new engineering discipline focused on designing connectivity infrastructure that can support real-time AI inference at the physical edge. The companies and countries that get this right will have a significant advantage in manufacturing, logistics, healthcare, and defense over the next decade.

What This Moment Requires

The technology industry has a habit of cycling between periods of software abundance — where the bottleneck is ideas and talent — and periods of infrastructure scarcity, where the bottleneck is physical capital. We are clearly in the latter phase now, and the decisions being made about infrastructure in 2026 will shape the competitive landscape for the next ten to fifteen years.

For enterprises: the question is no longer whether to adopt AI but whether your infrastructure strategy is robust enough to support AI at the scale your competitors will eventually deploy it. The companies treating AI infrastructure as a cost center rather than a strategic asset are setting themselves up for a painful reckoning.

For policymakers: the infrastructure war has produced a genuinely urgent set of questions about who should own the foundational layers of AI — and the window to shape those answers with real policy tools is narrowing faster than most legislators seem to appreciate.

For everyone else: the AI boom is no longer primarily a story about clever software. It is a story about who builds and controls the physical systems — chips, power grids, data centers, satellites — that intelligence runs on. That’s the story worth watching.

TechReview.trade covers emerging technology, AI infrastructure, and the global digital economy. This article was published on April 18, 2026.